IC2E 2017 Tutorial Program

- Tutorial 1: Cloud Computing for Science and Engineering: Scaling Science in the Cloud

- Tutorial 2: Parallelizing Trajectory Stream Analysis on Cloud Platforms

- Tutorial 3: Building Secure Cloud Architectures and Ecosystems Using Patterns

Cloud Computing for Science and Engineering: Scaling Science in the Cloud (revised agenda)

- Dennis Gannon (Indiana University, USA), Ian Foster (University of Chicago and Argonne National Lab), Vani Mandava (Microsoft Research)

- Wednesday, April 5 (full day)

Cloud computing is becoming an essential tool for research in science and engineering. This tutorial is intended to acquaint participants with the methods scientists and engineers use to conduct interactive scientific discovery with cloud-hosted tools and data. The tutorial is in two parts. The first part covers the basics of using the cloud, including data storage, virtual machines, and containers. Examples from many disciplines will be discussed, along with pointers to valuable data collections. Programming examples based on Jupyter Python notebooks will be used to illustrate many aspects of this approach to science. The second part describes how to solve problems that require scaling computation beyond a single server. Topics include Kubernetes, Mesos, how scientific applications can be built from microservices, and parallel cluster technology. We will also study scalable event-streaming technologies such as Kinesis, Storm, and Google’s Dataflow. Examples will be drawn from AWS, Azure, and Google, as well as from OpenStack as implemented in the NSF JetStream cloud.

Part 1. The Cloud and Interactive Scientific Discovery

- 10:30 An introduction to basic cloud access and operation

- Azure account setup and introduction to Jupyter

- Storage systems: blob stores, including Amazon S3, Azure Blob Storage, and OpenStack Swift; SQL and NoSQL storage, including Google Bigtable, AWS DynamoDB, AWS RDS, and Azure Tables

- Hands-on Lab: Blob and Table Storage using the Azure Portal and Jupyter.

- 12:00 Lunch

Part 2. Scaling Science in the Cloud

This section focuses on higher-level services in the cloud.

- 13:00 Resume

- 13:30 Virtual Machines and Containers

- Compute Infrastructure: Virtual Machines and how to launch them and attach storage. Demos from AWS and JetStream.

- Containers: Docker Demo.

- 14:15 Parallelism in the cloud (discussion and demo)

- MapReduce

- Spark and Hadoop

- Kubernetes, Mesos, and container services

- Microservice concepts and demo

- 15:00 Break

- 15:30 Data Analytics

- Hands-on Lab: Azure with Spark.

- 16:30 Machine learning and event stream analysis (1 hour).

- Survey discussion

- AzureML with Event Hub demo and Hands-on lab.

Prerequisites

No cloud background is assumed for the first half. Some Python programming experience is helpful. The second half assumes knowledge of the cloud at the level presented in the first half.

Biographies of presenters

Dennis Gannon is Professor Emeritus in the School of Informatics and Computing at Indiana University. From 2008 until he retired in 2014, Dennis Gannon was with Microsoft Research, most recently as the Director of Cloud Research Strategy. In this role he helped provide access to Azure cloud computing resources to over 300 projects in the research and education community in the U.S., Europe, Asia, South America, and Australia. His previous roles at Microsoft include directing research as a member of the Cloud Computing Research Group and the Extreme Computing Group. From 1985 to 2008 Gannon was with the Department of Computer Science at Indiana University, where he was Science Director for the Indiana Pervasive Technology Labs and, for seven years, Chair of the Department of Computer Science. In 2012 he received the President’s Medal for his service to Indiana University. While he was Chair of the Computer Science Department at Indiana University, he chaired the committee that designed the University’s new School of Informatics. For that effort he was given the School’s Hermes Award in 2006. He worked closely with the original TeraGrid project, where he helped launch the Science Gateways program.

Ian T. Foster is the Director of the Computation Institute in Chicago, Illinois. He is also a Distinguished Fellow and Senior Scientist in the Mathematics and Computer Science Division at Argonne National Laboratory, and a Professor in the Department of Computer Science at the University of Chicago.

Foster's honors include the Lovelace Medal of the British Computer Society, the Gordon Bell Prize for high-performance computing (2001), and the IEEE Tsutomu Kanai Award (2011). He was elected Fellow of the British Computer Society in 2001, Fellow of the American Association for the Advancement of Science in 2003, and in 2009, a Fellow of the Association for Computing Machinery, who named him the inaugural recipient of the High-Performance Parallel and Distributed Computing (HPDC) Achievement Award in 2012.

Vani Mandava is a Principal Program Manager with Microsoft Research in Redmond, with over a decade of experience designing and shipping software projects and features used by millions of people around the world. Her role on the Microsoft Research Outreach team is to lead the Data Science for Research effort, enabling academic researchers and institutions to develop technologies that fuel data-intensive scientific research using advanced techniques in data management and data mining, especially by leveraging the Microsoft cloud platform through the Azure for Research program. She leads Microsoft Research Academic Outreach North America efforts at the University of California, Berkeley and the Massachusetts Institute of Technology. She has enabled the adoption of data mining best practices in various v1 products across Microsoft client, server, and services organizations, including MS-Office, SharePoint, and Online Services (Bing Ads). She co-authored the book ‘Developing Solutions with InfoPath’.

She co-chaired KDD Cup 2013 and partners with many academic institutions and government agencies, including the NSF-supported Big Data Innovation Hub effort, a consortium coordinated by top US data scientists that is expected to advance data-driven innovation nationally in the US.

Parallelizing Trajectory Stream Analysis on Cloud Platforms

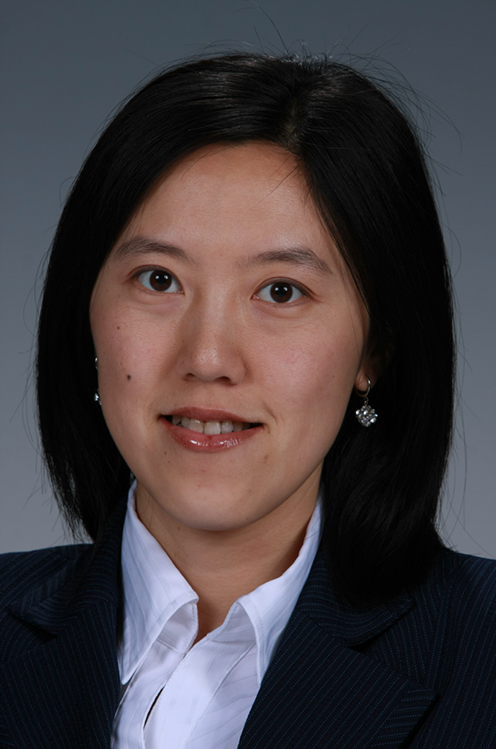

- Yan Liu (Concordia University, Canada)

- Thursday, April 6 (half day)

Advances in location-acquisition technologies have generated massive spatio-temporal trajectory streams, which represent the mobility of diverse moving objects over time, such as people, vehicles, and animals. Discovering traveling companions in trajectory data has many real-world applications. A key characteristic of trajectory streams is that large volumes of time-stamped data are constantly generated from diverse, geographically distributed sources. Techniques for handling high-speed trajectory streams must therefore scale on distributed computing clusters. The main issues span three aspects: the design of discovery algorithms that represent continuous trajectory data, their parallel implementation on a cluster, and optimization techniques. The goal of this tutorial is to provide a practical view of the techniques and challenges of parallelizing the analysis of big trajectory streams.

In this tutorial, we will present the following topics: (1) discovery algorithms to identify trends in trajectory data; (2) parallel programming frameworks for both batch and streaming implementations; and (3) optimization techniques for data partitioning and data shuffling. We will demonstrate case studies with public open datasets and a set of open source software frameworks on a fully integrated cluster environment on AWS.

Prerequisites:

This tutorial is self-contained. We will provide a beginner-level introduction to the programming models of both batch-mode and streaming-mode data processing (10% beginner level). We then cover the trajectory analysis algorithms (20% intermediate). This gives the audience the context needed to understand the techniques of data partitioning and parallel execution (30% intermediate) and the issues of optimization (30% advanced). Deployment on a cloud cluster also introduces a set of technologies widely used in industry for processing big data (10% intermediate).

Knowledge of and experience with distributed programming in Java will be useful, since the demonstrations are written in Java. Understanding the MapReduce programming model will also help, as the techniques in this tutorial are centered on key/value pair operations such as data partitioning, join, and aggregation.
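For readers new to key/value processing, the following is a minimal, single-machine sketch of the map, hash-partition, and per-partition grouping steps behind such techniques. It is written in Python for brevity (the tutorial's own demonstrations are in Java), and it is not the tutorial's companion-discovery algorithm: the function names, the grid-cell size, the time window, and the rule "objects sharing a spatio-temporal bucket are candidate companions" are all illustrative assumptions.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, Iterable, Iterator, List, Set, Tuple

# Hypothetical record layout: (object_id, timestamp, x, y).
Point = Tuple[str, int, float, float]
# Hypothetical key: (cell_x, cell_y, time_window), a spatio-temporal bucket.
Key = Tuple[int, int, int]


def map_phase(points: Iterable[Point], cell_size: float, window: int) -> Iterator[Tuple[Key, str]]:
    """Emit one (key, object_id) pair per point, keyed by grid cell and time window."""
    for obj, t, x, y in points:
        yield (int(x // cell_size), int(y // cell_size), t // window), obj


def partition(pairs: Iterable[Tuple[Key, str]], num_partitions: int) -> List[List[Tuple[Key, str]]]:
    """Hash-partition pairs so that all values for a given key land in the same partition."""
    parts: List[List[Tuple[Key, str]]] = [[] for _ in range(num_partitions)]
    for key, value in pairs:
        parts[hash(key) % num_partitions].append((key, value))
    return parts


def reduce_partition(part: Iterable[Tuple[Key, str]]) -> Dict[Key, Set[str]]:
    """Group object ids by key within one partition (the reduce side of the shuffle)."""
    groups: Dict[Key, Set[str]] = defaultdict(set)
    for key, obj in part:
        groups[key].add(obj)
    return groups


def companion_counts(points: Iterable[Point], cell_size: float = 1.0,
                     window: int = 10, num_partitions: int = 4) -> Dict[Tuple[str, str], int]:
    """Aggregate: for each pair of objects, count the buckets they shared."""
    counts: Dict[Tuple[str, str], int] = defaultdict(int)
    for part in partition(map_phase(points, cell_size, window), num_partitions):
        for objs in reduce_partition(part).values():
            for a, b in combinations(sorted(objs), 2):
                counts[(a, b)] += 1
    return dict(counts)


if __name__ == "__main__":
    sample = [("a", 0, 0.2, 0.3), ("b", 1, 0.5, 0.9), ("c", 2, 5.0, 5.0),
              ("a", 12, 1.1, 1.2), ("b", 13, 1.4, 1.9)]
    print(companion_counts(sample))  # {('a', 'b'): 2}
```

Because equal keys always hash to the same partition, each partition can be reduced independently, which is what allows the grouping step to run in parallel on a cluster. The number of partitions changes only how the work is spread, not the result.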

Biographies of presenters:

Yan Liu is an Associate Professor in the Department of Electrical and Computer Engineering, Concordia University, Canada. Her research interests are data stream processing, cloud software architecture, distributed computing, software performance engineering, and adaptive systems. She was a senior scientist at the Pacific Northwest National Laboratory in Washington State, where she led R&D projects on data-intensive computing for a wide range of domains, including smart grid, building energy optimization, chemical imaging, systems biology knowledgebase, and climate modeling. She was a senior researcher at National ICT Australia after receiving her PhD from the University of Sydney, Australia. She is a member of the IEEE and ACM.

Building Secure Cloud Architectures and Ecosystems Using Patterns

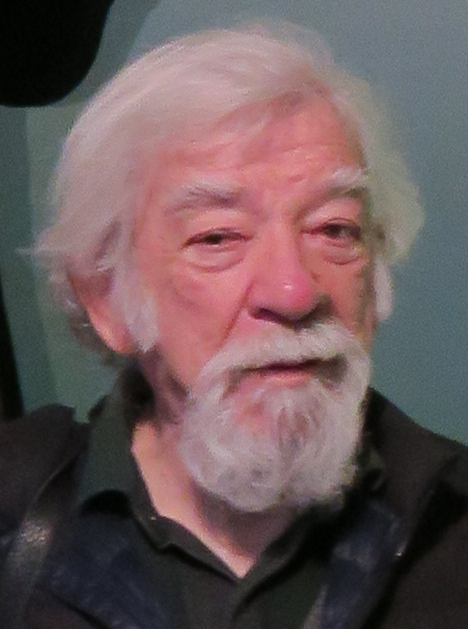

- Eduardo B. Fernandez (Florida Atlantic University, USA)

- Thursday, April 6 (half day)

Patterns combine experience and good practices to develop basic models that can be used to build new systems and to evaluate existing systems. Security patterns join the extensive knowledge accumulated about security with the structure provided by patterns to provide guidelines for secure system requirements, design, and evaluation. We consider the structure and purpose of security patterns, show a variety of security patterns, and illustrate their use in the construction of secure systems. These patterns include, among others, Authentication, Authorization/Access Control, Firewalls, Secure Broker, Web Services Security, and Cloud Security. We have built a catalog of over 100 security patterns. We introduce Abstract Security Patterns (ASPs), which are used in the requirements and analysis stages. We complement these patterns with misuse patterns, which describe how an attack is performed from the point of view of the attacker and how it can be stopped. We integrate patterns in the form of security reference architectures. Reference architectures have not been used much in security, and we explore their possibilities. We introduce patterns in a conceptual way, relating them to their purposes and to the functional parts of the architecture. Example architectures include a security cloud reference architecture (SRA) and a secure cloud ecosystem. The use of patterns can provide a holistic view of security, which is a fundamental principle for building secure systems. Patterns can be applied throughout the software lifecycle and provide a good communication tool for the builders of the system. The patterns and reference architectures are shown using UML models, and examples are taken from my two books on security patterns as well as from my recent publications. The patterns are put in context; that is, we do not present a disjoint collection of patterns but a logical architectural structure in which patterns are added where needed. In fact, we present a complete methodology for applying the patterns along the system lifecycle to build secure systems, and a process for building reference architectures.

Prerequisites

Basic knowledge of UML (at least the ability to read diagrams).

Biographies of presenters:

Eduardo B. Fernandez (Eduardo Fernandez-Buglioni) is a professor in the Department of Computer Science and Engineering at Florida Atlantic University in Boca Raton, Florida, USA. He has published numerous papers on authorization models, object-oriented analysis and design, and security patterns. He has written four books on these subjects, the most recent being a book on security patterns. He has lectured all over the world at both academic and industrial meetings. He has created and taught several graduate and undergraduate courses and industrial tutorials. His current interests include security patterns, cloud computing security, and software architecture. He holds an MS degree in Electrical Engineering from Purdue University and a Ph.D. in Computer Science from UCLA. He is a Senior Member of the IEEE and a Member of ACM. He is an active consultant for industry, including assignments with IBM, Allied Signal, Motorola, Lucent, Huawei, and others. More details can be found at http://faculty.eng.fau.edu/fernande/